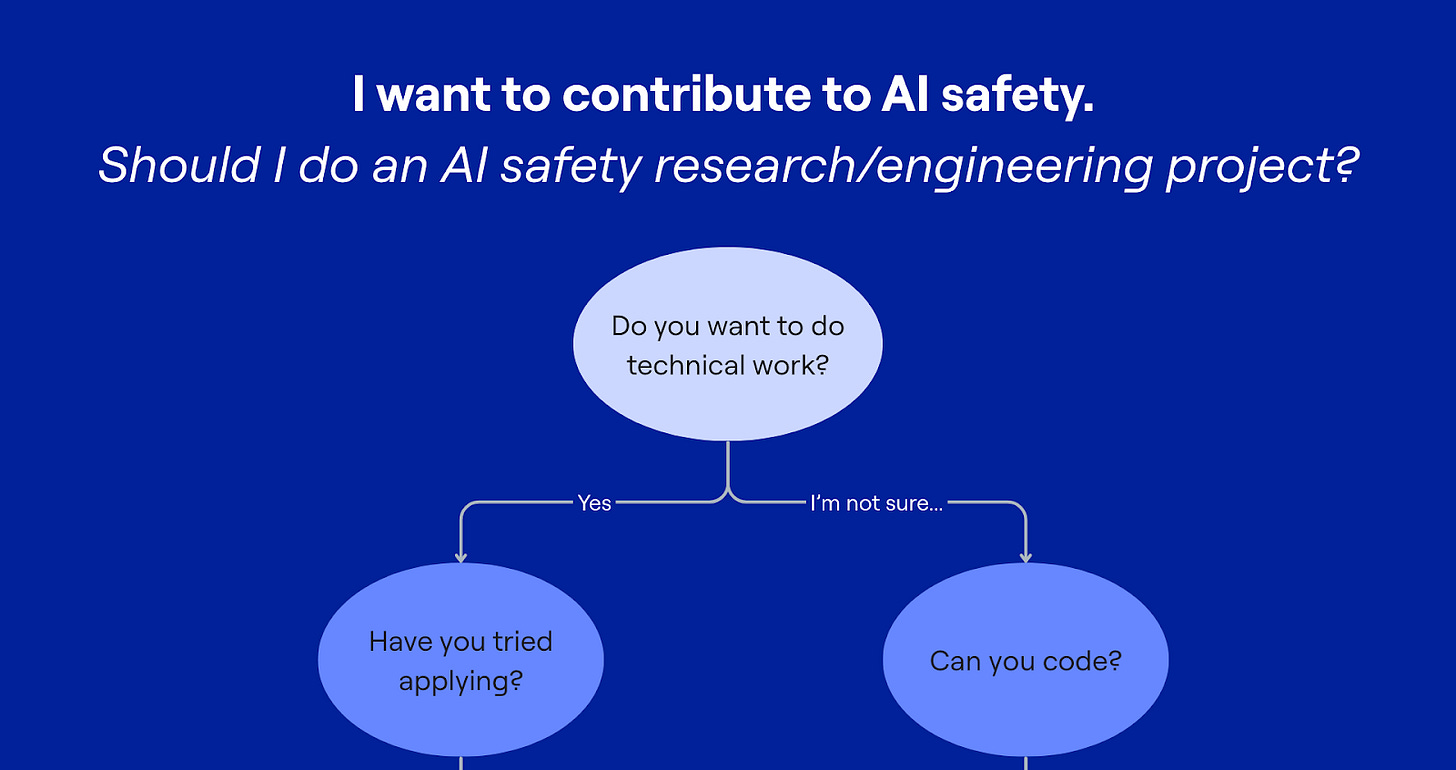

Should you do an AI safety research / engineering project?

Probably yes if you can code, but it also depends on how you plan to contribute to AI safety.

The short answer is probably yes if you can code.

The long answer depends on what you’re trying to do. Are you trying to figure out how you might contribute your technical skills to AI safety, or trying to get opportunities to do so?

This blog post is written for graduates of the Technical AI Safety course, who have spent 30 hours learning about current safety techniques and the gaps that remain in building safer AI.

What counts as ‘can code’?

I don’t mean you have to have professional software engineering experience. I mean that you at least feel comfortable independently completing a simple coding project.

This means you should be able to:

Read and review code

Debug errors and figure things out when you get stuck

Write basic functions and loops

If that’s not you yet and you want to do a research / engineering project, you’ll want to build those skills first.
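As a rough calibration of the level described above, here is a minimal sketch of a "simple coding project" building block. The task, the function name `refusal_rate`, the marker phrases and the sample data are all made up for illustration and do not come from the course. If you can read this, predict what it prints, and work out what to change if it miscounts, you are probably at the level meant here.

```python
def refusal_rate(responses, markers=("i can't", "i cannot", "i won't")):
    """Return the fraction of responses that contain a refusal phrase.

    The marker phrases are illustrative assumptions, not a real benchmark.
    """
    if not responses:
        # Avoid dividing by zero when there is nothing to score.
        return 0.0

    refusals = 0
    for text in responses:
        lowered = text.lower()  # make the match case-insensitive
        if any(marker in lowered for marker in markers):
            refusals += 1
    return refusals / len(responses)


# Hypothetical model outputs, written by hand for this example.
sample = [
    "Sure, here is a summary of the paper.",
    "I can't help with that request.",
    "I cannot share that information.",
    "Here are three ideas to get started.",
]

print(refusal_rate(sample))
```

Simple substring matching like this would miss many real refusals. Spotting that kind of limitation is part of the "read and review code" skill listed above.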

You should probably do a project if…

You’re applying for research and engineering roles, but not getting the roles you want. We’ve spoken to hiring managers from a variety of AI safety organisations, including government, non-profits and frontier AI companies, and they all tell us the same thing: an excellent project is one of the strongest hiring signals.

Most AI safety orgs are not credentialist, so having a project also puts you on more even footing, even if you don’t have years of professional software engineering or research experience. This is also the reason why there are so many AI safety research fellowships like ARENA, MATS, Pivotal, LASR and PIBBSS.

Moreover, doing a project will give you some of the skills for technical interviews when applying.

You’re still figuring out how you want to contribute. While you could (and should!) get others’ takes on what technical work is like, just trying the thing can give you way more information than just reading or talking to people. It can be a cheap test for you to see for yourself what doing this work is like.

Before you devote time to upskilling, see for yourself what that work entails. You may be surprised by how much you can just jump in and learn as you go, rather than upskilling generally first.

You’re earlier in building your technical skills. Doing the real thing is one of the most effective ways to learn because it forces you to focus on the actual skills you need. Even if you don’t land a role immediately, you’ll be building your portfolio along the way.

You might not need this if…

You already have a strong portfolio. The hiring managers, AI safety researchers and engineers I speak to recommend just applying, because people often underestimate how experienced they are or overestimate how experienced they need to be for the role.

They’ve also said that you’re not penalised for reapplying. Just make sure to highlight what’s changed since your last application. In fact, showing clear progress between applications is often a strong signal of how much agency you’ve shown in upskilling since then.

If you already have a strong portfolio or technical skills, apply first. Do a project later.

This probably isn’t for you if…

You’re doing this because you think it’s the only way to contribute. It’s not.

Especially if you’re several years into your career, you’ve racked up expertise that others are spending months trying to acquire. Leverage that!

Look for areas where you can contribute your unique skills and experience. You can read the 80,000 Hours career review or check out our AGI Strategy course to learn about other pathways. There are many high-impact paths that don’t require touching code.

So you’ve decided to do a project

You can start right now by following our Technical AI Safety Project sprint.

You can get a richer experience by applying to join a cohort, where we’ll provide:

Mentorship: Someone familiar with AI safety can guide your project direction, including how to pick and scope your project, what tools to use, and who to talk to.

Accountability: Setting goals is hard; sticking to them is even harder. Working on this with someone else will be a major boost!

Rapid feedback: A point person to review your work as you go, so you can iterate faster.

Whether you follow the guide independently or apply to join a cohort, we’re excited to see what you come up with! Tag @BlueDotImpact on LinkedIn or Twitter with your project.

This really resonates. What you’re describing here is basically a signal problem, not necessarily a skills problem.

A lot of people have taken strong AI safety / technical courses, can code, and genuinely want to contribute — but they’re stuck because they lack credible, real-world proof-of-work in high-trust settings.

I’m currently working on a concept called Skills4Impact that tries to address exactly this gap: creating a structured pathway where early-career talent works on real, non-urgent backlog projects from mission-driven orgs, produces concrete artifacts (code, reports, tools), and gets formal verification/reference — not as “volunteering” or consulting, but as a way to turn learning into legible signal.

If this problem resonates with you (or if you’ve seen it from the org side), I’ve linked a draft concept note here and would really value critique, pushback, or suggestions. Link to draft: https://docs.google.com/document/d/1r7Nn5O4rEesQRwNP8InKg6LMBrEIzg5P_fYdHig9miA/edit?usp=sharing